Project Kinect for Azure combines AI and Microsoft's next-gen depth camera

Kinect's legacy lives on.

Kinect, as it existed for Xbox, may be dead, but its legacy lives on. At its Build 2018 developer conference, Microsoft announced Project Kinect for Azure, a tool meant to help developers build new ambient computing applications.

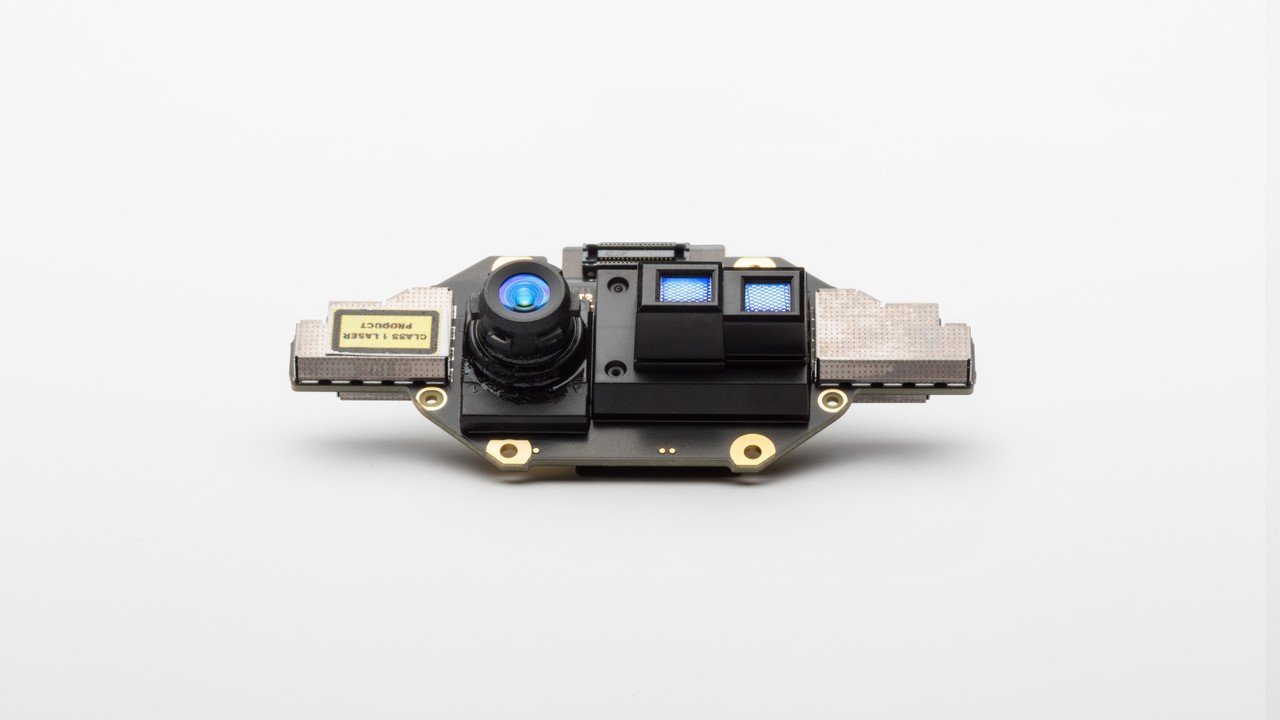

Microsoft hasn't provided a ton of information on Project Kinect for Azure yet, but what it has shared looks interesting. The package is based on the company's "next generation" depth camera, and it packs onboard computing capabilities built to run Azure AI services. In combination with Microsoft's time-of-flight sensor and additional sensors, Project Kinect for Azure can be used for hand tracking, high-fidelity spatial mapping, and more. As a bonus, the whole thing is small and power-efficient, Microsoft says.

In a blog post today, Microsoft technical fellow and HoloLens inventor Alex Kipman said:

> The technical breakthroughs in our time-of-flight (ToF) depth-sensor mean that intelligent edge devices can ascertain greater precision with less power consumption. There are additional benefits to the combination of depth-sensor data and AI. Doing deep learning on depth images can lead to dramatically smaller networks needed for the same quality outcome. This results in much cheaper-to-deploy AI algorithms and a more intelligent edge.

Kipman also confirmed that the Project Kinect sensor bundle will be used with the next version of HoloLens. Project Kinect will bring new capabilities to HoloLens, replacing the third-generation Kinect depth sensor used in the current HoloLens.

Though Kinect may have received the ax earlier this year, the tech behind it continues to serve as the basis for a range of Microsoft products. Microsoft's HoloLens has certainly benefited from the depth-sensing work done with Kinect, as has Windows Mixed Reality as a whole. It's not surprising that Microsoft would look to extend Kinect further as it focuses on AI and edge computing. What will be interesting is seeing what kinds of solutions developers come up with using Project Kinect for Azure.


Dan Thorp-Lancaster is the former Editor-in-Chief of Windows Central. He began working with Windows Central, Android Central, and iMore as a news writer in 2014 and is obsessed with tech of all sorts. You can follow Dan on Twitter @DthorpL and Instagram @heyitsdtl.

