Top 5 worst video game design mistakes

From the Atari 2600's temperamental controllers to the Xbox One's stereoscopic rumble triggers, gaming has certainly come a long way - but it's not all good news.

In this article, I'm going to sound off on some of the most annoying game design mistakes that, despite piles of feedback from gamers all over the world, still seem to crop up.

There are probably hundreds of game design flaws that manage to slip into our games, sometimes with the best intentions, but here are my personal five most reviled.

Forced multiplayer

Forced multiplayer is one of my pet peeves. This gripe extends to both co-op and separate multiplayer experiences that dilute an existing franchise known for its single player strengths.

Dead Space 3 is a stark example of how forced multiplayer can help kill a franchise. Dead Space came about at a time when triple-A horror was on the ropes, giving us a compromised but memorable action horror. Dead Space 2 built on the first, trading tension for shock value and spectacle, but was still solid overall and achieved decent sales. The second game had a Left 4 Dead-like multiplayer mode, which offered decent laughs for one or two sessions. I don't think anybody expected it to achieve any traction, but it was inoffensive.

I can't imagine the uninspired multiplayer in Dead Space 2 led to increased sales (and decreased re-sales), but therein lies the theory. Multiplayer should increase the life expectancy of a game, extending the period before a sale becomes a trade-in. There's a solid business argument for the practice, as used game sales eat away at a publisher's profits. But what EA did with Dead Space 3 has earned it a place on this list as a primary example of how not to haphazardly slap multiplayer into every franchise.

Co-op? In a horror game? Part of the core series? Not a spin-off? Not a separate mode? Really EA?

Like Capcom with Resident Evil 5 before it, EA pulled the multiplayer trigger, drawing the ire of fans everywhere. The difference between Resident Evil and Dead Space is that Resident Evil is a well-established brand and probably has several more shallow releases in it before people lose faith completely. Co-operative play drained the atmosphere from Dead Space 3 like a vampire and joined a catalogue of other terrible design decisions leading to the game's commercial failure and Dead Space's general hiatus.

The co-op wasn't Dead Space 3's only problem, not by a long shot, but it was certainly central to my loss of interest. Fear of used game sales has led to all sorts of shallow multiplayer components finding themselves forcibly grafted onto our favourite franchises. The Tomb Raider reboot's multiplayer is a contemporary example of shovelling a competitive deathmatch mode into an engine that wasn't designed for PVP. Assassin's Creed has been obscuring the feel of the franchise for a while with chaotic multiplayer modes, which has finally led to Ubisoft (rightfully) dropping multiplayer altogether for Assassin's Creed Syndicate.

It's hard to place the blame firmly on the publishers, who find their margins squeezed by used game vendors. There is simply a right way to go about it. Mass Effect 3 and Dragon Age Inquisition are great examples of games that did multiplayer modes correctly. Not only do they fit into the context of the single player campaign, but they've both enjoyed waves of free content to keep them fresh over time.

If tacked-on multiplayer modes are a by-product of used game sales, they should at least be halfway decent. Otherwise, it just feels as though the money could've been spent better elsewhere.

Shallow side-quests

Open world games have subscribed to the bigger is better mantra. As a result, they increasingly seem to struggle to populate their ballooning world sizes with worthwhile content. Although there are games out there with larger land masses than Skyrim, it remains the baseline measure for how large an open world should be. Not only did Skyrim have a huge overworld, it was also littered with handcrafted caves, forts, dungeons, towers and all sorts of complex structures that added to a grand sense of adventure. Other games have matched Skyrim for detail as well, but the story doesn't end there.

What do you put into your huge and detailed open world once it's built? If we're using Dragon Age Inquisition as an example, you fill your beautiful game world with mind-numbing context-devoid fetch quests that you might imagine are far beneath your hero's remit.

The issue is not that these quests exist; often it's that they are so lacking in context or relevance that they slap you out of the game. Grind settles in, immersion peels back and suddenly you're bored - something a video game should never be.

The Witcher 3: Wild Hunt is impossibly vast, yet I've still to come across a quest that felt out of place. Even the most minor "hunt this monster" quest draws on the narrative of other quests in the area. The quests coalesce to give entire populations of villager NPCs their unique place in the game's world. Side quests should be treated with the same dignity as the main story quests, rather than shoved in haphazardly to fill a quota. Hopefully, The Witcher 3 will help put the practice to sleep.

Premature releases

Console generation transitions seem complicated. While the PS4 and Xbox One still have small install bases, publishers end up splitting title development across PS3, PS4, Xbox One, Xbox 360 and PC to accommodate. Each platform has different architecture, hardware features, software features and certification processes, which probably lead to some of the issues I'll describe in this segment.

Battlefield 4 was a hot mess at launch. Disconnection, game crashes, graphical oddities, rife net code problems and other systemic problems marred what would've been a major new gen celebration. EA and DICE put production of the game's DLC on hold to repair the issues, which were still present months after launch. Hop on to Battlefield 4 now and you'll have a pleasant experience, but this was certainly not the case back in 2013.

How could such a thing happen? Doesn't one of the world's largest publishers test their major flagship franchises before launch?

During an investor call several months after launch, EA CEO Andrew Wilson described the state of Battlefield 4:

..."When Battlefield 4 launched, it was a very complex game, launching on two entirely new console platforms, as well as current-gen and PC. [...] Based on our pre-launch testing and beta performance, we were confident the game was ready when it was launched. Shortly after launch, however, we began hearing about problems from our player community, and the development team quickly began to address the situation."...

It certainly seems that EA has rectified its internal mechanisms for quality control ahead of Battlefield Hardline, which has enjoyed a solid launch. Lessons other developers should have learned from BF4's launch plight apparently went unnoticed, as we've seen from the troubled launches of Halo: The Master Chief Collection and Assassin's Creed Unity.

Halo: MCC should have been a flagship entity for the Xbox One going into the holiday season last year. But the ambitious cross-title multiplayer system simply did not work, rendering matchmaking largely impossible during the entirety of the holiday period.

The multiplayer-focussed Assassin's Creed Unity suffered similar problems. Beyond the connectivity issues, the game simply didn't run properly. Frame rate issues, crashing, bugged quests and other oddities led to a formal apology from Ubisoft's CEO, the cancellation of ACU's season pass and the delivery of a free game for anyone unlucky enough to have bought one.

..."I want to sincerely apologize on behalf of Ubisoft and the entire Assassin's Creed team. These problems took away from your enjoyment of the game and kept many of you from experiencing the game at its fullest potential."...

I realise that publishers have roadmaps and budgets to adhere to, but when a game ships broken, it can cause irreparable damage to a franchise's brand. The damage goes further when you factor in the costs involved in lost sales from bad reviews, and compensation of free games and DLC (like Halo: MCC's free Halo ODST addition).

I'm certainly no game developer, and I cannot sympathise fully with the intricacies of development challenges faced when producing such large and complex products. Regardless, I offer a simple solution to the custodians of every highly anticipated upcoming game.

Do more quality tests. Delay your games as necessary.

I hate to use The Witcher 3: Wild Hunt as another example of game launches done correctly, but CD Projekt RED delayed the hell out of it. As a result, despite minor issues, the end product is near perfect. I appreciate the efforts of large development teams and feel inclined to place the blame on publishers for having unrealistic expectations and imposing strict deadlines. It seems as though at least EA, Microsoft and Ubisoft have gotten on board. Hopefully the delays of Quantum Break and Tom Clancy's The Division will yield similar benefits to The Witcher 3.

Quick Time Events

I hate quick time events. I think they're one of the laziest and most boring game design aspects of the last decade, and someone needs to kill them. The reason this is at the fore of my mind is Mortal Kombat X, which uses the mechanic liberally in its story mode cutscenes.

I might use a non-interactive cut-scene in a game to take a sip of tea or reply to a tweet, but some games insist on making this impossible. Quick time events demand your participation like an attention-starved child, stomping their feet if you dare look away. Mortal Kombat X isn't the worst QTE offender by a long shot. In MKX, time slows down slightly when a QTE rears its ugly head, so at least you don't miss any of the action while you're scanning the screen for the next button mash. Other games aren't so considerate.

Metal Gear Rising's quick time events occasionally took place during highly choreographed and, frankly, spectacular cut-scenes. Platinum go to lengths to make sure their QTEs match the combat system, but sometimes the result is an inability to fully appreciate set pieces. You'll be transfixed by the snippet of the screen where random, skill-less whack-a-mole buttons flash, instead of the epic scene unfolding before you.

QTEs aren't always bad. Having limited control over a character that's impaired in some way makes sense. The gruelling crawl sequence through a microwave corridor at the end of Metal Gear Solid 4 is a good example. The half-dead knife fight at the end of Call of Duty: Modern Warfare 2 was also satisfying and climactic.

At their worst, QTEs not only prevent you from properly enjoying a highly choreographed sequence, but they also trivialize the content. The lack of control can rip you from the experience, reminding you that you're not a sexy space marine saving the universe. In other words, they have the opposite of the intended effect. Far Cry 3 undermined the character development of its antagonists by reducing boss fights to press-X-to-win QTE sequences. Call of Duty: Advanced Warfare's highly ridiculed "press X to pay respects" funeral scene unintentionally came across as both patronising and pretentious in one fell swoop.

I'll leave you with a hilarious glitch from the prolific QTE offender Heavy Rain. (Spoilers)

Micro-transactions

This is my number one game design mistake, but again, I don't think micro-transactions are always bad. As our expectations rise, budgets rise with them, and additional revenue streams from micro-transactions could lead to better games in theory. More money = better games, right? What do you mean I'm naïve?!

This is an argument primarily against poor implementation but also greed.

Micro-transactions are most often used in mobile titles and for good reason. Data suggests that the majority of us ignore the micro-payments, and will decline to sink a single penny into a free to play title. We simply aren't the target audience. The industry chases payments from what it calls 'whales'. These players are likely to spend dozens, maybe hundreds of dollars on micro-transactions in a single title. Some studies show that just 0.15% of mobile gamers contribute to over 50% of mobile game revenue, and those margins are increasingly attractive to 'Triple-A' console devs.

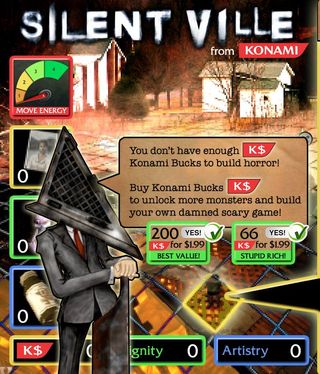

Micro-transactions in completely free-to-play games seem fair to me, but we increasingly see micro-transactions creep into Triple-A titles that already cost $70. Games like Assassin's Creed Unity have gone as far as to offer micro-payment options in the pause menu like intrusive 90s pop-ups.

Ubisoft defends these micro-transactions as "optional" for the sake of play, but it's a weak rationalization. The simple fact we're exposed to these gameplay circumventing transactions makes them like the pay-per-view ads you get on YouTube. Every time you hit pause you're promptly slapped out of your leisure time and reminded that you're not there to enjoy the game you just paid for, you're just another potential whale to uncaring suits. Marketing coercion should end at the pre-purchase junk these companies peddle. The crime is subjecting us to these marketing practices even after we've put our faith into the price of entry.

It's hard to describe micro-transactions as a mistake when you look at the numbers. For publishers who struggle to compete at a high-end level, devolving into a freemium Zynga-mimic may be the only option. Then why do the most successful publishers like Ubisoft and EA have the gall to ram micro-transactions into fully priced titles? These are companies who boast insane profits even when you remove the revenue generated from micro-payments.

Dragon Age Inquisition's and Battlefield 4's gear-unlocking micro-transactions are buried away in menus, which is at least a compromise. The micro-payment addict will still sniff them out, and I can readily ignore that they exist. EA might have learned this lesson from the backlash against Dead Space 3's odious pay-to-build crafting system. I wouldn't be surprised if Ubisoft's flirtation with micro-payment companion apps and gameplay-altering boosters gets ditched for Assassin's Creed Syndicate, which is already looking like one big "I'm sorry".

When a game is as fun as it is cheap (or free), like SMITE or TF2, paying a fee for a cosmetic bonus to give back to the devs feels almost righteous. Having the "option" to unlock on-disk content or circumvent hard-coded time sinks in a fully priced title, however, is sickening. Sadly though, it's such an easy revenue stream to implement we're likely to see the practice expand rather than shrink. Big publishers like Konami and Capcom are moving increasingly towards mobile, most likely taking their beloved IP with them. Sonic uses his speed only to chase mobile whales for Sega nowadays.

We can only hope that the practices don't become accepted on console titles. Any fully priced title that brazenly chases us for additional micro-payments will be angrily degraded in any review I do.

Necessary evils?

Ultimately, at least with regards to big publishers, it's their shareholders they listen to rather than you or me. Budget extensions might not be possible on unfinished games. Micro-transactions might be the quickest and easiest way to shore up missed financial targets, and strategies for preventing used game sales will continue until we go completely digital.

It's up to us as gamers to provide our feedback (respectfully) and if necessary, vote with our wallets.

There's a ton of other game design flaws that haven't made this list (it's long winded enough). But I'd be interested to hear what pet peeves you guys have in the comments section below. Thanks for reading.

Jez Corden is a Managing Editor at Windows Central, focusing primarily on all things Xbox and gaming. Jez is known for breaking exclusive news and analysis as relates to the Microsoft ecosystem while being powered by tea. Follow on Twitter @JezCorden and listen to his XB2 Podcast, all about, you guessed it, Xbox!