Details on Xbox streaming console leak, backed by Project xCloud

Details have leaked on Microsoft's upcoming streaming Xbox console, which is designed for Project xCloud.

Work on Microsoft's next gaming consoles is already underway, with reports suggesting a tiered family of Xbox devices under the Xbox Scarlett codename. While a high-end flagship comparable to Xbox One X is likely, we also expect a low-cost alternative, pairing with the newly-unveiled Project xCloud game-streaming service.

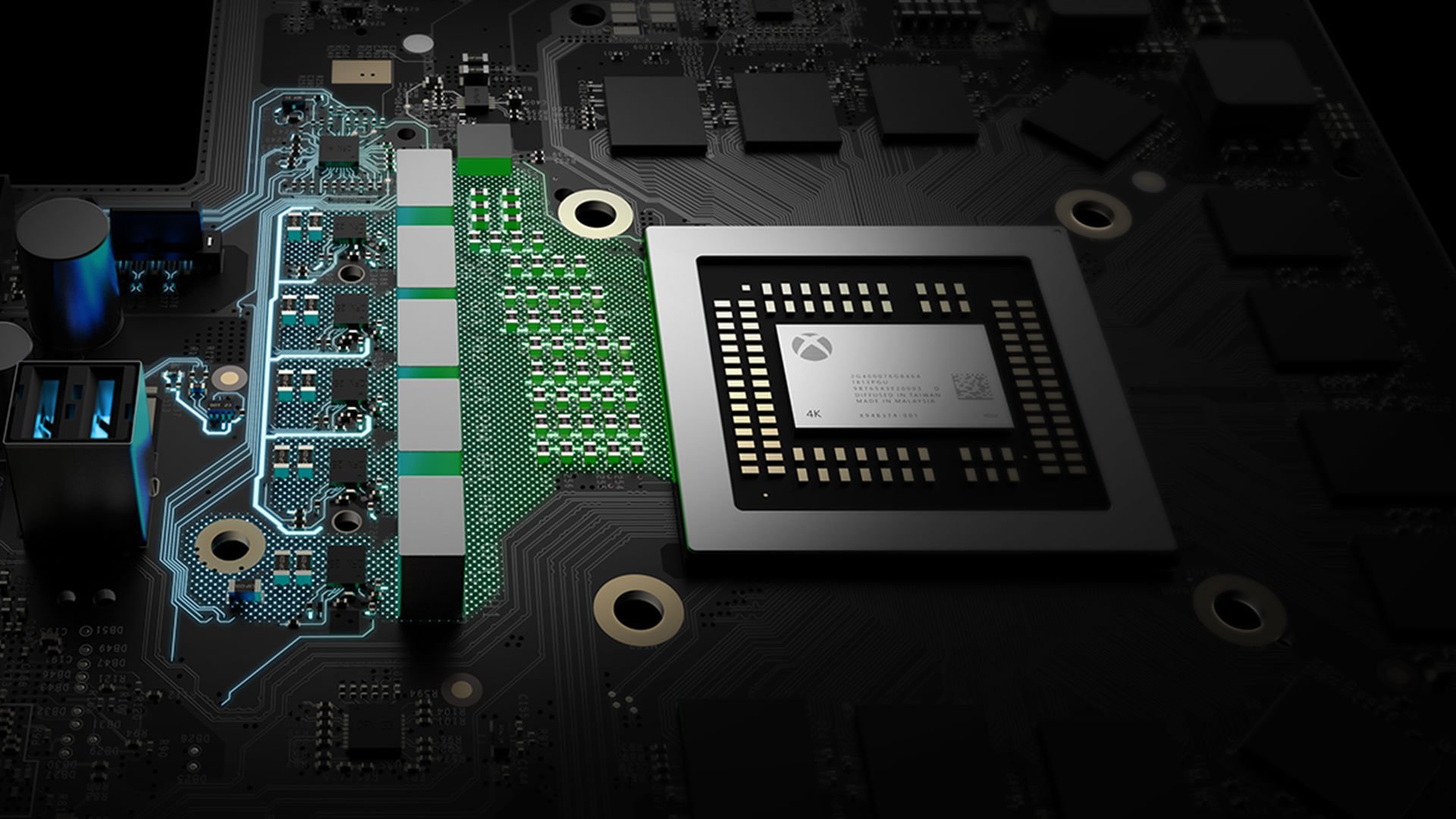

A new report from Wccftech expands on the rumored cloud-backed device, claiming Microsoft plans to use a semi-custom AMD chipset based on AMD's Picasso Accelerated Processing Unit (APU) series. By bundling the CPU and GPU on a single die, the chip is expected to balance performance and power consumption, aiding a compact form factor. An earlier report also claimed Microsoft plans to use the Picasso series in its next Surface Laptop refresh.

The report also backs our prior Project xCloud coverage, detailing a planned hybrid approach to improve remote play. Latency-sensitive elements of a title would be processed locally, while graphics-intensive components would be handed off to the cloud backbone. Deep learning could also be used to predict player actions, aiming to cut latency further.

Project xCloud is shaping up as a critical part of Microsoft's future gaming efforts and is expected to launch in 2020 or earlier. Building on its success with Xbox Game Pass, Microsoft plans to use xCloud to reach new gamers, banking on the service's extension to mobile devices.



Matt Brown was formerly Windows Central's Senior Editor, Xbox & PC, at Future. With over seven years of professional consumer technology and gaming coverage, he’s focused on Microsoft's gaming efforts. You can follow him on Twitter @mattjbrown.

