Why Microsoft must bring sign language recognition to Windows and Cortana

Microsoft's inclusive design efforts must include sign language recognition in Windows 10 and Cortana to support the deaf and hard of hearing community.

Microsoft's inclusive design mission is guiding the company in ensuring its products and services are designed from conception forward with every user of all ability levels in mind. Though the company has made admirable progress in this regard, it is still a work in progress.

Microsoft's Seeing AI app helps people with blindness, Project Fizzyo supports children with cystic fibrosis, the Emma Watch and Project Emma aid people with Parkinson's disease, and Microsoft's Immersive Reader helps children with dyslexia. Still, there are millions of people with varying levels of ability who are either excluded from interacting with the technologies of modern society or whose physical limitations prevent their full participation in everyday tasks.

Microsoft has embraced the challenge of creating specific solutions, like the tremor-halting Emma Watch, which targets a particular aspect of a disability. It has also built solutions that level the playing field into its technologies, like gaze control in Windows, which enables people with limited mobility to navigate the OS. Given this integrated solution for people with para- or quadriplegia, a similar OS-level solution that enables Windows or Cortana to understand sign language seems like a natural goal for Microsoft. It would serve the 466 million people with disabling hearing loss in a world where "speaking to AI is becoming the norm." And given that a developer "modified Alexa" to do just that, we know that it's also possible.

If Alexa can do it, so can Cortana/Windows

Developer Abhishek Singh created a web application that uses a camera to read sign language, translates the signs into speech and plays that speech aloud for Alexa to hear via Amazon's Echo. After Alexa speaks her response, the system provides a typed version of it that the user can read.

Using the machine learning platform TensorFlow, Singh trained an A.I. to understand American Sign Language and used Google's text-to-speech to translate the signs into spoken words. Singh said, "The project was…inspired by observing a trend among companies of pushing voice-based assistants as a way to create instant, seamless interactions."
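To make that flow concrete, here is a minimal Python sketch of the same loop: recognize signs, speak the resulting query to the assistant, then return the assistant's reply as text. It is not Singh's code. The feature vectors, the nearest-neighbor classifier standing in for a trained TensorFlow model, and the `speak` and `listen` callbacks standing in for text-to-speech and speech-to-text are all assumptions made for illustration.

```python
from math import dist
from typing import Callable, Sequence

# Hypothetical labelled examples: one small feature vector per sign.
# A real system would get features from a trained vision model, not hand-typed numbers.
TRAINING_EXAMPLES = [
    ((0.9, 0.1, 0.0), "Alexa"),
    ((0.1, 0.8, 0.1), "what's"),
    ((0.0, 0.2, 0.9), "weather"),
]


def classify_sign(features: Sequence[float]) -> str:
    """Return the label of the closest training example (1-nearest-neighbor)."""
    _, label = min(TRAINING_EXAMPLES, key=lambda example: dist(example[0], features))
    return label


def signs_to_query(frames: Sequence[Sequence[float]]) -> str:
    """Turn a sequence of per-sign feature vectors into a text query."""
    return " ".join(classify_sign(frame) for frame in frames)


def relay(
    frames: Sequence[Sequence[float]],
    speak: Callable[[str], None],
    listen: Callable[[], str],
) -> str:
    """Speak the signed query to the assistant and return its reply as text."""
    query = signs_to_query(frames)
    speak(query)      # stand-in for text-to-speech aimed at the smart speaker
    return listen()   # stand-in for speech-to-text of the assistant's reply


# Usage with stubbed audio: record what would be spoken, fake the reply.
spoken = []
reply = relay(
    [(0.8, 0.2, 0.1), (0.2, 0.7, 0.0), (0.1, 0.1, 0.8)],
    speak=spoken.append,
    listen=lambda: "It is sunny today.",
)
print(spoken[0])  # Alexa what's weather
print(reply)      # It is sunny today.
```

Keeping the audio steps behind callbacks means the recognition part can be tested without a microphone or speaker, and any speech service could be swapped in later.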

Given Microsoft's A.I. and machine learning investments, its Cognitive Services that recognize human faces, activities, speech and more, and the role of the camera in Windows PCs for biometrics, Microsoft has the end-to-end resources to create a system that can communicate with users who use sign language.

Inclusion is what Microsoft is about

Most companies have some degree of dedication to inclusive design. Microsoft is not unique in that regard.


Microsoft is unique, however, in that its CEO, Satya Nadella, is personally driven toward inclusion goals by his experience raising two children with disabilities, including his son Zain, who has severe cerebral palsy. This has led Nadella to promote a pervasive empathy mission throughout Microsoft that imbues its inclusive design efforts with a depth of sincerity, a level of detail and a breadth of scope that make it stand out in the industry.

Windows now has gaze technology as a result of a hackathon project that enabled a former NFL great with amyotrophic lateral sclerosis (ALS) to play with his son despite using a wheelchair. Immersive Reader is integrated throughout Microsoft's cross-platform products like OneNote, enabling school systems and parents to support children with dyslexia and other disabilities. Sign language recognition built into Windows/Cortana would be a systemic component of the platform that fits naturally with Microsoft's other efforts.

Including sign language recognition is a must

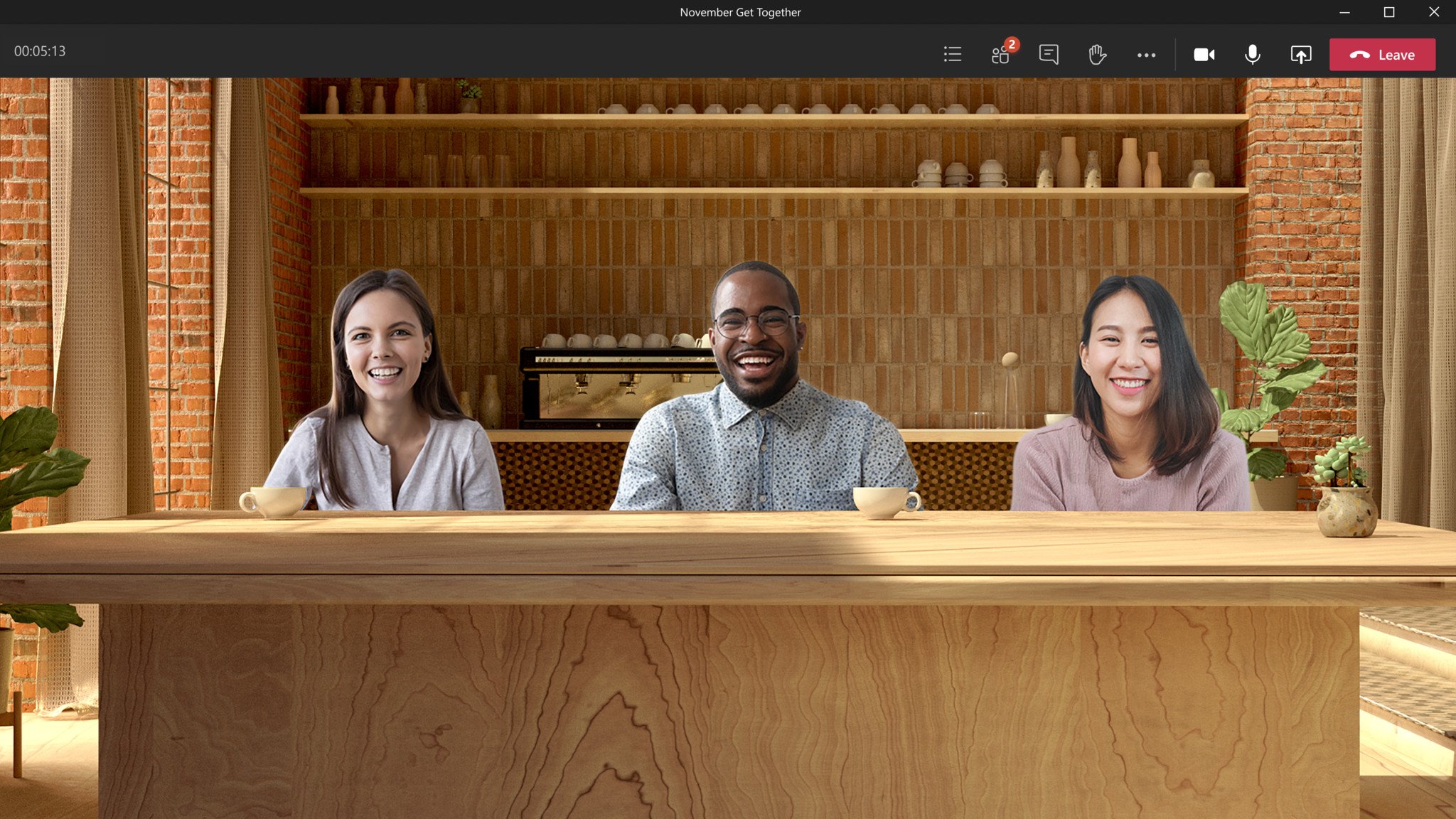

Cortana navigates a meeting with a deaf person in attendance.

If Microsoft's products are meant to be used by everyone, then sign language recognition must be a part of the equation. Though the company's digital assistant and smart speaker efforts have paled in comparison to the success rivals are enjoying, Microsoft still has a case for making this move.

At Build 2018, Microsoft demonstrated Cortana navigating a meeting, transcribing the conversation, responding to participants and providing text that a deaf or hard of hearing participant could read. A natural progression from that scenario would be to include sign language recognition for people who are deaf and do not speak, and for others who rely on sign language because they are unable to speak.

Microsoft's partnership with Amazon to bring Cortana and Alexa together shows that it is serious about keeping its ambient computing efforts visible in the consumer space. Perhaps the push to bring sign language recognition to Windows and Cortana could be boosted by similar or joint efforts integrated into Alexa. However it pans out, it just needs to happen. I hope Microsoft agrees.

Jason L Ward is a Former Columnist at Windows Central. He provided a unique big picture analysis of the complex world of Microsoft. Jason takes the small clues and gives you an insightful big picture perspective through storytelling that you won't find *anywhere* else. Seriously, this dude thinks outside the box. Follow him on Twitter at @JLTechWord. He's doing the "write" thing!
