How Microsoft's AI platform is giving people with blindness superpowers

Microsoft's mission to help individuals and businesses to do more includes bridging the gap between the sighted and the blind.

According to the World Health Organization, there are about 253 million people living with some degree of visual impairment or blindness.

Many of these people receive assistance in the form of a service animal, human aid or technological support. Honing their other senses to levels that may seem to approach superhuman abilities (to those of us with less acute senses) is another means by which people with blindness attempt to bridge the gap between themselves and the sighted.

Microsoft and developers have seen this reliance on other senses as an opportunity to introduce technology as a bridge between the sighted and the blind. Through Microsoft's AI platform and Cognitive Services, other senses are "enhanced" to provide some of the benefits of sight.

AI and Cognitive Services foundation

In recent years, Microsoft has made a big deal about AI. CEO Satya Nadella has stressed that AI will be infused in everything Microsoft does. This includes its intelligent cloud, products and services like Office, Edge and Cortana, billions of IoT devices and more. Microsoft's vision is that ambient computing will be intelligent, perceive our activity, understand us and proactively meet our needs.

Microsoft's AI everywhere strategy and Cognitive Services are making AI in our image

The company's Cognitive Services, which include vision, speech, knowledge, hearing and language APIs, are critical to imbuing AI systems with human-like "senses."

It is the combination of Microsoft's AI platform and Cognitive Services that has enabled the company and developers to create tools that help the deaf "hear," the immobile move and the blind "see."

Seeing AI opens eyes

During Microsoft's 2016 Build developer conference, CEO Satya Nadella introduced Saqib Shaikh, a software engineer at Microsoft. He was presented as the creator of an amazing prototype app that "sees" the world via Microsoft's Vision APIs. Shaikh, wearing a pair of dark glasses, demonstrated how AI verbally described the world, activity, text, people and even emotions to the blind. Shaikh himself is blind.

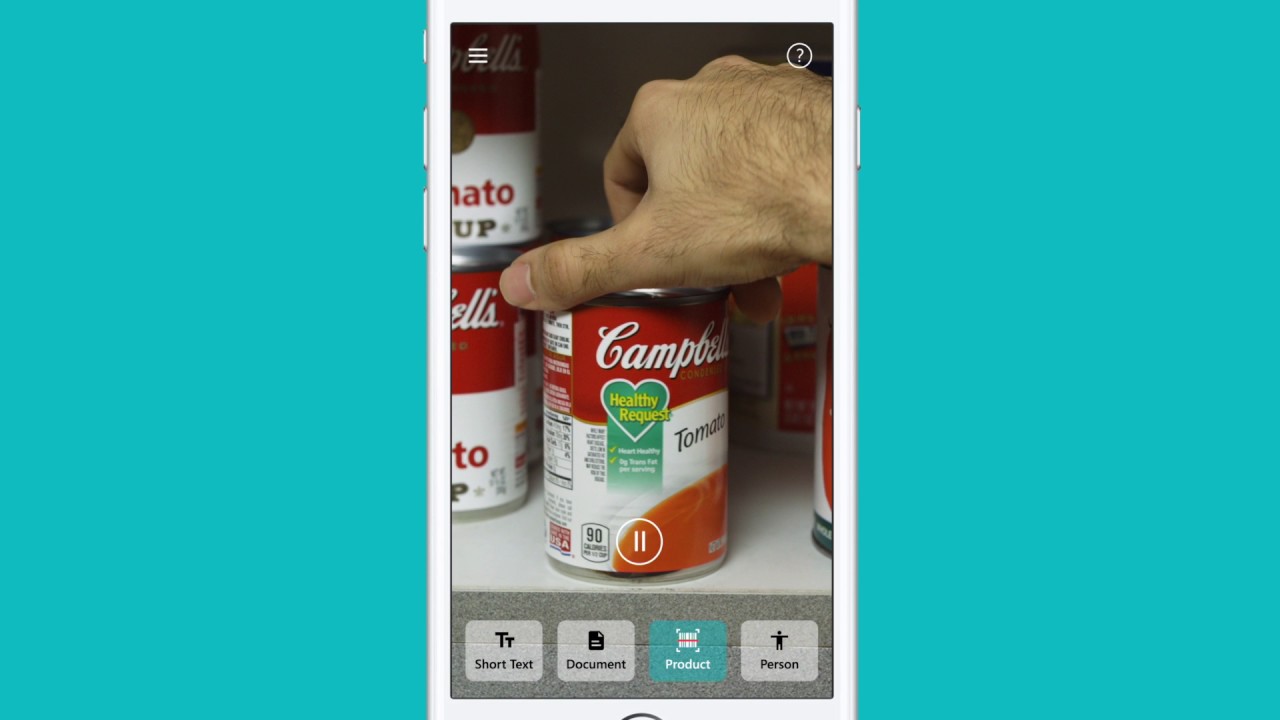

Shaikh's app ultimately became Seeing AI and is currently available on iOS. The app uses the phone's camera to identify products like a jar of peanut butter, describe images in other apps, read printed documents and recognize people, their age and emotions.

Seeing AI allows people with blindness to "see" the world in a way they could not see it otherwise. Shaikh's accomplishment, using Microsoft's AI platform and Cognitive Services, is a testament to how inclusive hiring can significantly benefit a segment of the population that is often overlooked.

Soundscape lets blind people explore the world

Microsoft Soundscape is a research project, delivered as an iOS app, that allows people with blindness and low vision to explore the world. It is designed to work in the background to provide a user with ambient awareness and can, therefore, be used in conjunction with other apps.

In a nutshell, the app uses 3D audio technology and allows "users to place audio cues and labels in 3D space" so that they sound like they're coming from buildings, roads, points of interest and more in a user's surroundings. Other app features include:

- As you walk, Soundscape will automatically call out the key points of interest, roads and intersections that you pass.

- An audio beacon can be placed on a point of interest, and you will hear it as you move around.

- "My location" describes your current location and the direction you are facing.

- "Around me" describes nearby points of interest in each of the four cardinal directions, helping with orientation. (This is important when getting off a bus or leaving a train station.)

- "Ahead of me" describes points of interest in front of you, for example when walking down the street.
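To make the "Around me" idea concrete, here is a minimal sketch of how nearby places could be grouped by cardinal direction. It is not Soundscape's actual code: the function names, the coordinates and the tuple layout are all hypothetical, and it simply applies the standard initial-bearing formula to latitude and longitude pairs.

```python
import math

def bearing_degrees(lat1, lon1, lat2, lon2):
    """Initial compass bearing from point 1 to point 2, in degrees (0 = north)."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dlon = math.radians(lon2 - lon1)
    x = math.sin(dlon) * math.cos(phi2)
    y = (math.cos(phi1) * math.sin(phi2)
         - math.sin(phi1) * math.cos(phi2) * math.cos(dlon))
    return math.degrees(math.atan2(x, y)) % 360

def cardinal_direction(bearing):
    """Map a bearing to the nearest of the four cardinal directions."""
    names = ["north", "east", "south", "west"]
    # Shift by 45 degrees so each direction owns a 90-degree quadrant.
    return names[int(((bearing + 45) % 360) // 90)]

def around_me(user, places):
    """Group (name, lat, lon) places by their direction from the user (lat, lon)."""
    groups = {"north": [], "east": [], "south": [], "west": []}
    for name, lat, lon in places:
        b = bearing_degrees(user[0], user[1], lat, lon)
        groups[cardinal_direction(b)].append(name)
    return groups

# Hypothetical example data: four places around a user.
nearby = around_me(
    (47.0, -122.0),
    [("cafe", 47.01, -122.0), ("bus stop", 47.0, -121.99),
     ("park", 46.99, -122.0), ("library", 47.0, -122.01)],
)
print(nearby)
```

An app built this way could then read each group aloud in turn, which is roughly the experience the feature list describes.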

Like Seeing AI, Soundscape provides individuals with blindness with a level of autonomy they otherwise would not have. And as the user in the above video shares, it also allows them to enjoy their surroundings (because the app tells them what's around them) in a way their sighted friends can.

White Cane on HoloLens is ambitious

Microsoft's mission is to give people the tools to create technology. Javier Davalos, a Portland-based HoloLens developer, embraced that mission by creating an AR version of the white cane that people who are blind use to help them navigate the world. (Above video)

Davalos' app spatially maps a room. And like a bat's sonar, which uses sound to detect objects and food, it uses sound to help users navigate and understand their environment.

The app uses varying degrees of sound intensity to alert users to their proximity to an object. It also uses different sounds to identify different types of objects, like a wall or floor.
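As a rough illustration of that idea, the sketch below maps distance to volume and surface type to a sound. It is an assumption-laden toy, not Davalos' implementation: the three-meter range, the linear falloff, the sound names and both function names are invented for the example.

```python
# Hypothetical mapping from surface type to a distinct sound.
SOUND_FOR_SURFACE = {"wall": "low_hum", "floor": "soft_click"}

def proximity_volume(distance_m, max_range_m=3.0):
    """Return a volume in [0.0, 1.0] that grows as an object gets closer."""
    if distance_m >= max_range_m:
        return 0.0  # Out of range: stay silent.
    # Linear falloff; negative distances are treated as zero.
    return 1.0 - max(distance_m, 0.0) / max_range_m

def audio_cue(surface, distance_m):
    """Pick a sound for the surface type and a volume for its distance."""
    sound = SOUND_FOR_SURFACE.get(surface, "generic_tone")
    return sound, proximity_volume(distance_m)

print(audio_cue("wall", 1.5))
print(audio_cue("chair", 5.0))
```

A real spatial audio app would likely also position each sound in 3D space, but the core mapping from distance to intensity can be this simple.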

HoloLens is a long way from being a mainstream consumer product. Thus, Davalos' HoloLens-based app won't see widespread use anytime soon. Still, it is a practical application of Microsoft's technology to help bridge the gap between the sighted and the blind.

Seeing a closing gap

Microsoft's AI, Cognitive Services and other technologies have been used to make the world more inclusive. They have helped:

- Deaf people hear

- People with ALS regain mobility

- People tackle Parkinson's disease through Project Emma

- Blind children learn to code

With the continued evolution of AI, its ability to understand our world and the creative passions of developers, we are seeing a closing gap between what people with blindness and sighted people "see."

Jason L Ward is a Former Columnist at Windows Central. He provided a unique big picture analysis of the complex world of Microsoft. Jason takes the small clues and gives you an insightful big picture perspective through storytelling that you won't find *anywhere* else. Seriously, this dude thinks outside the box. Follow him on Twitter at @JLTechWord. He's doing the "write" thing!
