FreeSync vs G-Sync: Which is best for you?

Two kinds of sync can make your PC gaming experience much better. But which one is for you?

The decision boils down to one key choice: AMD or NVIDIA. The best graphics cards from both companies will let you play the latest games at varying levels of detail and resolution, but at their core, they all do the same thing.

When it comes to taking it up a gear, you have FreeSync, and you have G-Sync. Again, similar features, but the former is supported by AMD and the latter by NVIDIA.

If you've already made your graphics card choice and you don't plan on switching, the decision is probably already made. But if you're still in the buying process, let's break down a few key factors that can help you decide.

What are FreeSync and G-Sync?

FreeSync allows the graphics card and connected monitor to communicate with one another so that the monitor's refresh rate varies to match whatever the graphics card is currently outputting. The result is a stable, super smooth experience. It isn't supported by every AMD graphics card, though newer ones will be fine.

G-Sync synchronizes the monitor and graphics card using an on-board module inside the display. The pair will only run as fast as the slower of the two can handle, depending on where the bottleneck happens to be. The net benefit is that the monitor displays every single frame produced by the GPU, resulting in a super smooth experience.

Ultimately the end result for both is a very similar experience: silky smooth gameplay. The two companies approach it a little differently, but the output is very alike.
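
To make the idea behind both technologies concrete, here is a minimal Python sketch comparing when frames reach the screen on a fixed-refresh panel and on a variable-refresh one. It is a toy model, not how either FreeSync or G-Sync is actually implemented: the function names, the 20 ms refresh interval, the 7 ms minimum interval, and the frame times are all made-up assumptions, and behavior below a panel's minimum refresh rate is not modeled.

```python
def present_fixed(render_ms, interval_ms=20):
    """Display times (ms) on a fixed-refresh panel with v-sync on.

    A finished frame has to wait for the next refresh boundary.
    Simplification: the GPU starts the next frame once the previous one is shown.
    """
    shown, last = [], 0
    for r in render_ms:
        ready = last + r
        # Round up to the next multiple of the refresh interval.
        tick = -(-ready // interval_ms) * interval_ms
        shown.append(tick)
        last = tick
    return shown


def present_variable(render_ms, min_interval_ms=7):
    """Display times (ms) on a variable-refresh panel.

    The panel refreshes as soon as a frame is ready, but never faster
    than its own maximum rate (hypothetical min_interval_ms).
    """
    shown, last = [], 0
    for r in render_ms:
        ready = last + r
        t = max(ready, last + min_interval_ms)  # the slower of GPU and panel wins
        shown.append(t)
        last = t
    return shown


frame_times = [15, 22, 18, 25]  # hypothetical render times in ms
print(present_fixed(frame_times))     # [20, 60, 80, 120]
print(present_variable(frame_times))  # [15, 37, 55, 80]
```

In this toy run the fixed panel shows frames 20, 40, 20, and 40 ms apart, which is the uneven pacing you notice as stutter, while the variable panel shows each frame the moment it is done.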

The big difference between the two is that NVIDIA G-Sync requires monitors to embed a chip, which affects the retail price. We'll look at that more below.

Cost differences: Graphics cards

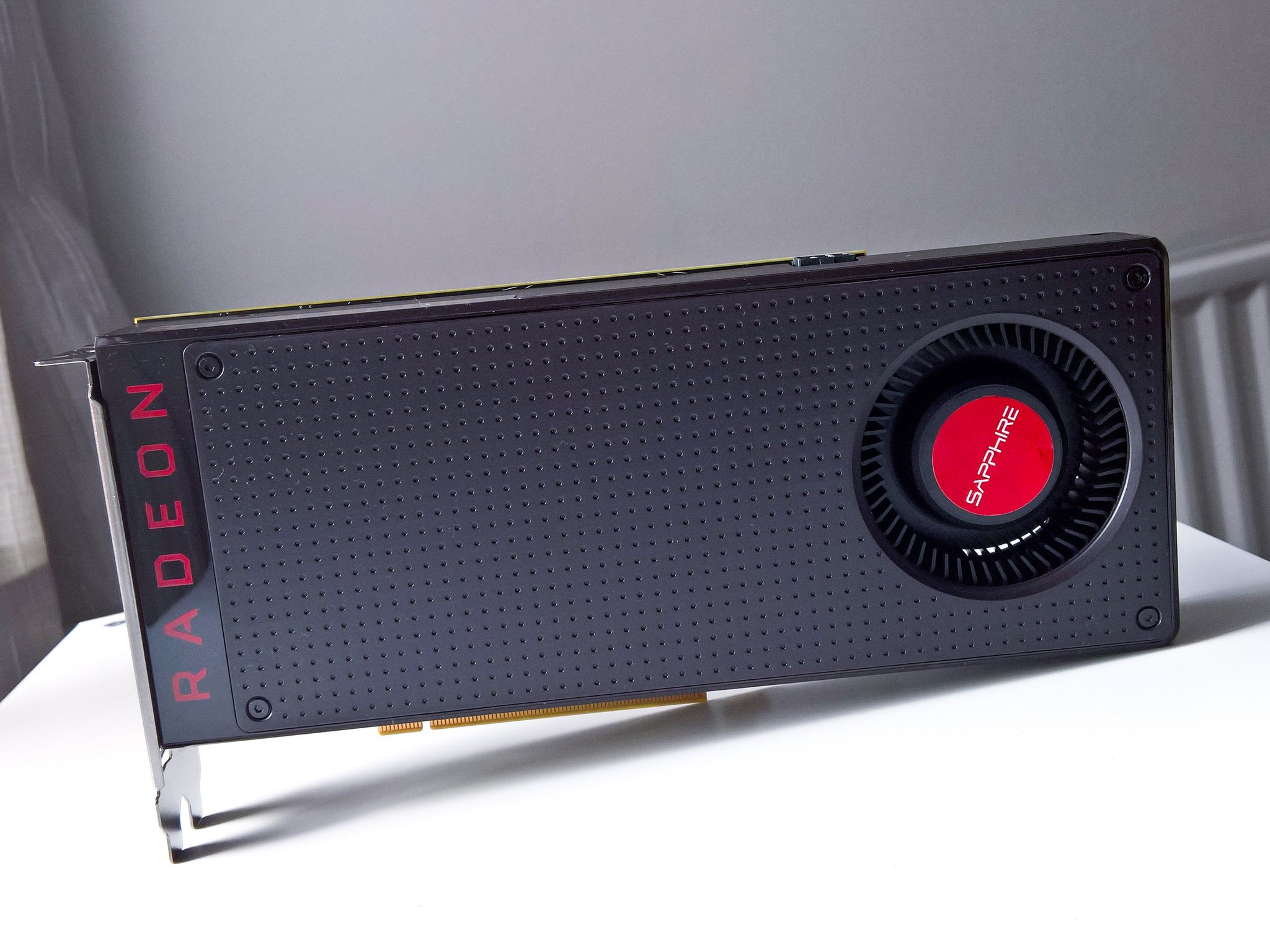

There's an assumption that AMD cards are better for gamers on a budget, and while that's not entirely incorrect, you can't just take it as fact and leave it there. At the time of writing, NVIDIA and AMD are targeting different markets. AMD sits firmly towards the budget end, with the RX 460 through to the RX 480, while NVIDIA covers a wider range, from the entry-level GTX 1050 all the way up to the new GTX 1080 Ti.

That doesn't mean AMD's cards are bad, far from it. The RX 480 is very capable, as is the RX 470. And if you shop around you'll find an 8GB RX 480 for around $200 or not much more.

By contrast, the highest-end NVIDIA card starts at $699, though it's far and away more powerful than anything AMD has to offer right now. At a similar price to the AMD cards, you'll find the GTX 1050 Ti and the GTX 1060, both excellent units for 1080p gaming at mid-to-high graphical settings.

AMD has its RX Vega cards coming later in 2017, but right now its highest-end card is available for the price of a mid-range NVIDIA one. So if price is important, going with AMD will save you some dollars.

Cost differences: PC monitors

When it comes to choosing a PC monitor, there's no ambiguity like there is with graphics cards: G-Sync panels cost more. There's absolutely nothing anyone can do about that, because NVIDIA dictates that its module must be present in any G-Sync monitor.

FreeSync, by contrast, has a clue in its name. It doesn't generally add to the cost of the monitor. Manufacturers could slip in a little increase, but the price gap to a G-Sync equivalent will still be significant.

For example, you can get FreeSync in even a cheap gaming monitor from folks like ViewSonic for $140. For a G-Sync monitor, you're looking at around $400 for something like a 24-inch monitor from Dell. It's the same across the board. The HP Omen 32 we recently reviewed supports FreeSync for $400 on a 32-inch 1440p monitor. There's no way it'd be priced so low if it had G-Sync.
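
Adding up the prices quoted in this article gives a rough sense of the gap. The short Python sketch below does only that arithmetic; the dictionary keys and the `build_cost` helper are made up for illustration, the figures are the approximate ones mentioned above at the time of writing, and the two builds are not like-for-like in performance.

```python
# Approximate USD prices as quoted in this article; real prices will vary.
PRICES = {
    "RX 480 8GB": 200,
    "GTX 1080 Ti": 699,
    "ViewSonic FreeSync monitor": 140,
    "Dell 24-inch G-Sync monitor": 400,
}


def build_cost(card, monitor, prices=PRICES):
    """Total for one graphics card plus one monitor (hypothetical helper)."""
    return prices[card] + prices[monitor]


freesync_build = build_cost("RX 480 8GB", "ViewSonic FreeSync monitor")
gsync_build = build_cost("GTX 1080 Ti", "Dell 24-inch G-Sync monitor")
monitor_gap = PRICES["Dell 24-inch G-Sync monitor"] - PRICES["ViewSonic FreeSync monitor"]

print(freesync_build)                # 340
print(gsync_build)                   # 1099
print(gsync_build - freesync_build)  # 759
print(monitor_gap)                   # 260
```

Even on the monitor alone, the examples above differ by about $260 before the graphics card enters the picture.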

The bottom line

On the whole, the biggest benefit of FreeSync gaming is cost. As things stand, you'll spend less on a current-generation graphics card and less on a PC monitor to use it with. You may sacrifice some top-end power and things like 4K gaming, but you'll have silky smooth, crisp graphics and money in your pocket.

If your gaming tastes are for the bleeding edge, the latest, greatest, most powerful of everything, then you're going to get an NVIDIA card. You're already going to spend more on that, and then you'll spend more on a G-Sync monitor to go with it.

The end experience between the two systems is very similar, but how much you've spent to get there could be very different.

Richard Devine is the Managing Editor at Windows Central, where he combines a deep love for the open-source community with expert-level technical coverage. Whether he’s hunting for the next big project on GitHub, fine-tuning a WSL workflow, or breaking down the latest meta in Call of Duty, Forza, and The Division 2, Richard focuses on making complex tech accessible to every kind of user. If it’s happening in the world of Windows or PC gaming, he’s probably already knee-deep in the code (or the lobbies). Follow him on X and Mastodon.
